The Computer Industry For All Mankind Never Needed to Build

Disclosure: This reflects my personal experience and interpretation of publicly available information. It represents my views alone—not any employer or organization—and is not professional advice.

Editor's note: This is V2 of the essay, originally published on April 10, 2026, the night of the Artemis II splashdown. This revision restructures the Apple and Unix sections after further analysis of how AT&T's regulatory status affects the path by which Unix escapes institutional control. The core thesis is unchanged. The cascade is cleaner.

Fifty-three years. That's how long it took us to go back to the Moon. Apollo 17 returned in December 1972. Artemis II splashed down today, April 10, 2026, in the Pacific off San Diego. Four astronauts. Ten days. 695,000 miles. The farthest any human has ever traveled from Earth.

In the gap between those two missions, we dismantled the computing infrastructure that made the first one possible and rebuilt it from scratch. Twice. At some point, this stops being progress and starts being a renovation project that never ends.

This is a what-if essay. What if IBM never fell? It is the biggest "what if" in computing, the existential scenario that did not transpire, and these are thoughts many people in our industry have had but never spoken out loud.

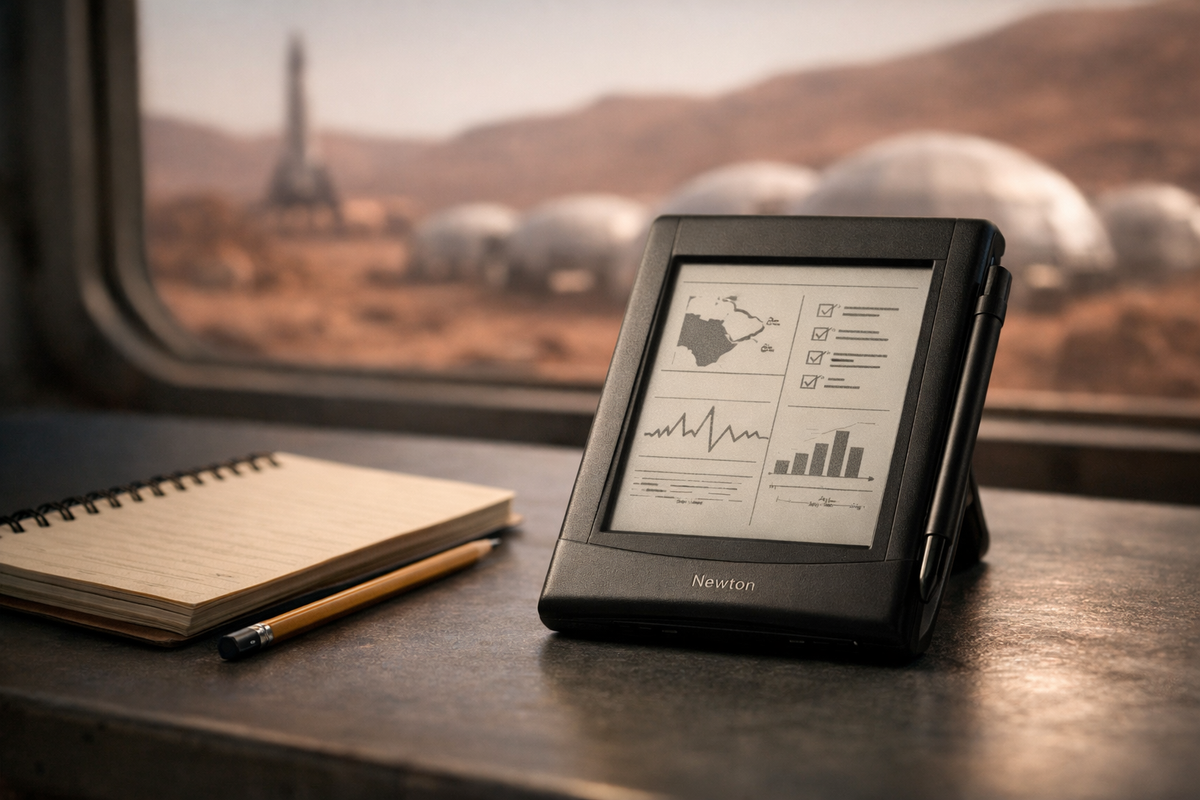

There's a throwaway line in For All Mankind that stuck with me. The show, for those who haven't seen it, is an Apple TV+ alternate-history series in which the Soviet Union beats the United States to the Moon and the space race never ends. An astronaut casually notes that a 20-year-old spacecraft they're refurbishing has less computing power than his Newton. It lands like a joke. It isn't. Notice who's making the quip: not a teenager, not a commuter. An engineer referencing a niche tool for people who work with systems. That alone tells you computing developed differently.

Most people watch that show for the geopolitics. I keep watching for the computers.

None of what follows is depicted in the show. The computer industry is a prop, not a plot element. This essay is the writer's guide that doesn't exist: the technology bible for a world the show implies but never explores. It solves for the show's shortcomings, and even the most speculative parts follow logically from the show's own premises. Think of it as a treatment for a spinoff nobody asked for, the way Star City tells the For All Mankind story from the Soviet perspective. This is the version from Armonk.

The Collapse That Enabled Everything

In our world, Apollo ends, and something breaks. Funding shifts. Urgency fades. The large-scale systems that defined computing lose their central purpose. Into that vacuum comes the personal computer, commodity hardware, distributed systems, the internet, and the cloud. We broke computing into pieces. Then we spent 40 years putting it back together.

Everything in this essay stems from one event: the space race ended. And when it did, it pierced IBM's armor.

As long as Apollo runs, IBM is indispensable. It builds the systems that put humans on the Moon. It has purpose, funding, and political cover. When Apollo ends in 1972, IBM doesn't collapse immediately. But the wound is there. The urgency that justified IBM's dominance fades. The arrogance that was tolerable when IBM was running the space program becomes intolerable when it's just selling business machines. That arrogance, the pricing, the bundling, and the leasing-only model create the gap. And into that gap come the 1969 antitrust suit, the PC, the clone makers, and eventually every company and career that defines our industry.

In the For All Mankind timeline, that wound never occurs. IBM stays powerful, arrogant, and uncontested. The space race keeps running. The armor never cracks. And everything that follows in our timeline, the entire fragmentation, the entire reconstruction, never needs to happen.

A World Where the Stack Never Flattened

In the world implied by For All Mankind, the industry doesn't flatten. It stratifies and stays that way. Three layers persist.

At the top, IBM, UNIVAC, and Burroughs don't fade. They evolve into planetary-scale coordination engines, mission-critical infrastructure, and real-time, always-on compute. Not relics. The core. The center of the computing universe is Armonk, Poughkeepsie, and Fishkill, not Santa Clara and Cupertino. Silicon Valley still has its semiconductor fabs — Fairchild and Shockley predate the divergence — but it never becomes the cultural and economic center of computing. It stays a place that makes components, not a place that makes history.

Xerox is still in Stamford. HP is still in Palo Alto, but as an instrument, workstation, and printer company. Chicago's status is outsized: Motorola in Schaumburg makes the chips and runs the wireless infrastructure that connects everything. Texas is a technology powerhouse decades earlier than in our timeline, with EDS in Dallas running the systems and Texas Instruments in the semiconductor mix. The geography of American technology is the Rust Belt, the Northeast Corridor, and Texas. California is where you go for movies, not microprocessors.

There is an uncomfortable truth underneath this model, and it has nothing to do with technology. If the space race never ends and the political landscape of For All Mankind holds, the antitrust activity that shapes our computing industry largely doesn't happen. The 1956 consent decree already exists, but the 1969 antitrust suit that dogged IBM for 13 years and shaped its behavior throughout the entire PC era never gains the same traction. AT&T never gets broken up. The regulatory pressure that would force IBM to further open its patents, tolerate plug-compatible manufacturers, and eventually create the conditions for the PC never materializes with the same force.

In that world, the door opens only as wide as IBM wants it to. No other company in this solar system competes with IBM. They exist because IBM allows them to exist and interact with its ecosystem. It's tolerance, not competition. IBM is the sun. Everything else orbits. Less Silicon Valley, more Tokugawa Japan. Thomas J. Watson occupies the same place in that world's mythology that Steve Jobs occupies in ours. In our timeline, John Akers presides over IBM's decline and gets pushed out in 1993. In theirs, he is the visionary leader running the most powerful technology company on earth at the peak of its influence. Our timeline's failure is their timeline's hero. The cult of personality just orbits a different star.

A caveat before we go further. This timeline diverges from ours around 1969, and the cascading effects accelerate through the 1970s and 1980s. Some of the companies discussed below may exist in that world. Some may not. Think of it as a seating chart for a dinner party where some of the guests may not show up. I'm playing Hari Seldon with the computing industry, which is an appropriately nerdy thing to do with another Apple TV+ show's premise. The irony is that their timeline is actually easier to predict than ours. IBM stays dominant, the industry stays monolithic, the blocks are larger, the players are fewer. Psychohistory works better when the system has fewer moving parts.

Jupiter

If IBM is the sun, Motorola is Jupiter: not the center, but massive, influential, and orbited by its own moons.

Motorola becomes the dominant chipmaker, the owner of wireless infrastructure, and arguably the second-most important technology company in the world after IBM. The 68000 didn't need a consumer PC explosion. It was already the processor of choice for aerospace, defense, telecommunications, and industrial control. In a world where those markets remain the center of computing, Motorola becomes the Intel of that timeline.

The semiconductor partnership between IBM and Motorola is natural but unremarkable. IBM designs the POWER architecture for high-end systems. Motorola fabricates a scaled-down variant for mid-range use. Call it MicroPOWER or whatever IBM's naming committee comes up with. The chips are made in Fishkill. Nobody holds a press conference. It's just how the supply chain works.

But chips are only half the story. Motorola built the first commercial cell phone in 1983, dominated the pager market, and owned police, fire, and emergency communications infrastructure. In a world where wireless networking is institutional rather than consumer, Motorola controls the silicon and the airwaves. Schaumburg isn't just the chip capital. It's the communications capital. No Qualcomm or Broadcom to compete with for the standards. You don't connect to a coffee shop hotspot. You authenticate through your organization. The idea that anyone could just hop on a wireless network without institutional affiliation would seem as strange to them as walking into IBM's data center seems to us.

And Motorola is the one company IBM can't buy. Swallowing the company that makes your chips and runs your wireless infrastructure would make IBM so obviously a monopoly that even that timeline's lax regulatory environment would notice. Every other company orbits IBM. Motorola orbits alongside it.

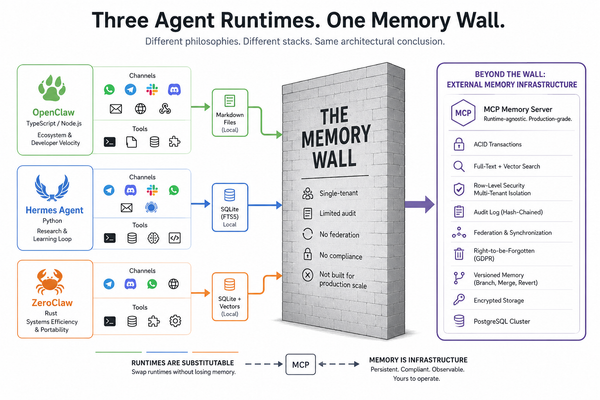

Unix Never Escapes

To understand the rest of this timeline, you have to understand what happens to Unix. Because everything downstream — Apple, the web, Linux, open source — depends on whether Unix breaks free of institutional control.

I wrote a piece for ZDNet arguing that without Dennis Ritchie, there would be no Steve Jobs. More on Ritchie in a bit. Ritchie and Ken Thompson at Bell Labs created C and UNIX, the DNA of virtually every modern operating system. C begat C++, which begat Java. UNIX begat BSD and inspired Linux, which begat Android. Every iPhone runs on a descendant of work done at Bell Labs in New Jersey.

But that lineage only unfolds because Unix escapes.

In our timeline, Unix escapes through a mixture of licensing, academic leakage, legal ambiguity, and the institutional weakness that follows AT&T's breakup. BSD starts as patches to AT&T Unix at Berkeley, rewrites the proprietary code out of the system over the years, and survives the lawsuit that follows. That escape requires academic freedom, legal uncertainty, and institutional weakness.

Now remove that weakness. If AT&T stays intact and Bell Labs remains unified, Unix System V stays tightly controlled. BSD never gets far enough outside the fence to matter. Unix doesn't become a freely redistributable substrate. It stays what it was designed to be: an institutional operating system, licensed, controlled, embedded in the system that created it.

Without BSD escaping, Linux becomes far less necessary. Linux wasn't just software. It was a response to a particular historical moment: cheap personal computers, fragmented systems, and the belief that expensive institutional software could be rebuilt from below. Remove those conditions, and you don't get Linux as we know it. Linus Torvalds is a very talented systems programmer at a Finnish institution rather than the most important software developer of a generation. Nobody gets a tattoo of a COBOL subroutine (e.g., IDENTIFICATION DIVISION, which admittedly sounds like a 1980s goth band). But at least there's no Java. And no JavaScript. So it's not all bad.

Ritchie's work still matters in that timeline. C still matters. Unix still matters. But they remain institutional, licensed, and embedded. Unix runs on RS/6000s, DEC Alphas, and HP 9000 workstations running HP-UX, but you use it because your organization licenses it, not because someone liberated it. COBOL and Fortran dominate where Unix doesn't reach: business logic on the mainframes, scientific computing and space program simulations on everything else.

And this is the domino that makes their world look so different from ours. Not one thing among many. The thing. Unix staying inside the fence changes how we collaborate, which companies survive, what Apple becomes, whether the web arrives open or institutional, and whether Linux ever needs to exist at all.

No Linux, But SHARE Scales Instead

Without Linux, without free Unix, collaboration doesn't disappear. It evolves differently.

SHARE, founded in 1955 as an IBM mainframe user group, was one of the earliest examples of organized software sharing. Contributions were identity-bound, institutionally backed, and curated. It was collaborative development before anyone called it that. In that timeline, SHARE becomes the dominant model. Not open source as we know it. Structured collaboration inside the system.

Richard Stallman still exists. He still cares about software freedom. But the trigger that radicalizes him lands differently in a world where institutional code sharing is the norm. He's the cantankerous figure at SHARE conferences insisting contributions should be more open. He writes manifestos. People respect him. Some roll their eyes. He's the movement's conscience, not its founder. An odd prophet in a world that's already halfway to his vision and won't go the rest of the way. So, actually, not that different from our Stallman.

Look at open source today. It is dominated by corporate contributors, governed by foundations, and aligned with vendor roadmaps. Kubernetes, TensorFlow, and PyTorch were all born inside corporations. The romantic image of a lone hacker in a dorm room is mostly a founding myth. We may be rediscovering SHARE. We just call it something else.

The Companies That Evolve

The biggest question mark is why Apple exists at all. In a world where IBM faces no antitrust pressure and institutional computing solves most problems, it probably doesn't. But this is an Apple TV+ show, and Apple's alternate-universe products and logos are highly visible in the production design. So we have to account for it. Wozniak still builds the Apple II because that's who Wozniak is. But the personal computer stays niche. An education tool. A creative instrument. Never the center of computing.

That niche is what saves Apple. The unsolved problem in that world is the interface. IBM builds infrastructure. DEC and HP build departmental systems. Nobody builds a great interface. Apple does. The Newton evolves because Apple has nowhere else to go. Think of it as a very expensive piece of glass that lets you see into the mainframe. Which, if you think about it, is basically what your iPhone is now. We just took a 40-year detour to get there. Apple becomes a company focused on thinking, writing, and interaction. It doesn't conquer computing. It integrates into it.

And critically, Apple never becomes a Unix company. In our timeline, Jobs founded NeXT after leaving Apple, built it on a Unix foundation, and when Apple bought NeXT in 1997, Unix became the core of modern macOS. That rescue path only exists because Unix escapes institutional control. In a world where it doesn't, there is no NeXT as we know it. There is no Darwin. No modern macOS. The BSD that begets Mach and begets NeXT is never born. Neither is Objective-C, the object-oriented C that becomes NeXT's foundation and Apple's future. The entire chain from Bell Labs to Berkeley to NeXT to modern Apple snaps at the second link.

Apple keeps evolving its own software lineage: the original Mac ideas, the Newton ideas, document-centric interaction, and local intelligence married to institutional back ends. The "Mac" of that world isn't a Unix workstation. It's a gorgeous, overpriced interface system designed to elegantly connect to larger institutional networks without pretending to replace them. Oh, wait.

Or Apple gets swallowed entirely. A niche interface company with great design sensibility and no scale is exactly the kind of acquisition target that a larger player in the middle tier would find irresistible. The show never actually shows us a Newton. That device comes from a single line of dialogue. What it does show is what appears to be a 2012 iMac on Dev Ayesa's desk, but it never tells us who makes it. This is the blurriness Hari Seldon can't resolve. He can map which institutions survive. He can't tell you whose logo is on the bezel.

The GUI theft narrative still happens. Jobs still visits PARC. Apple still ships the Macintosh with ideas that originate at Xerox. But in a legal landscape that favors incumbents over disruptors, Xerox litigates and wins. Apple is forced to license significant GUI intellectual property. Xerox doesn't become a platform company — that's not who Xerox is — but it becomes something like a software version of ARM: a design lab that licenses foundational interface technology to the institutional players. Xerox becomes a small, profitable, intellectually prestigious company that everyone in the industry depends on and nobody outside it has heard of.

Advanced Psychohistory

This is where the branches get harder to see. The further out from the divergence point, the more the predictions blur. But the big patterns are still visible if you squint.

Xerox may eventually be acquired, or it may go the other way and acquire. In a world where Microsoft never captures the platform and remains a tools-and-productivity-software company, Xerox buying Microsoft is a natural fit: PARC's interface research combined with Microsoft's applications suite, all licensed to institutional players. Xerox becomes the company that owns how you see and interact with data. Which is what Microsoft eventually becomes in our timeline anyway. Some outcomes are apparently inevitable. They just wear different logos. IBM acquiring Xerox is equally plausible: it gets the GUI patents, the research lab, the printer business, and the document technologies. IBM already owns the data. Owning the interface to the data and the way it gets printed is just closing the loop.

Intel never becomes the center of gravity. Without the PC creating insatiable x86 demand, the x86 architecture never achieves dominance. Microsoft never captures the platform. Dell and Compaq never exist. They are pure products of the PC era. Intel still makes chips, but it has roughly the same relevance in that world as MOS Technology has in ours: a company that made interesting processors that most people have never heard of.

Sun Microsystems doesn't exist. It's a workstation company in a world where DEC and HP are healthy and thriving in that tier. In this reality, Sun is a me-too product with no gap to fill. Andy Bechtolsheim still builds interesting hardware at Stanford. Bill Joy still writes brilliant code at Berkeley. But the company they cofounded has no market to enter.

Silicon Graphics never forms, either. Jurassic Park still needs rendering. But those machines are HP Apollos and IBM RS/6000s. Pixar buys its rendering hardware from HP. Cisco is equally unlikely. Its core insight, that the money is in connecting the chaos, has no chaos to connect. And these are just the names we can trace. Wang. Palm. Entire lineages of technology that never begin because the conditions that create them never arrive. The further you look from the center of this solar system, the more the map dissolves into empty space.

Hewlett-Packard is the wildcard. HP is already a systems company before any of this plays out. HP-UX first ran in 1983 on Motorola 68000-based HP 9000 systems. HP is a 68000 shop from the start, which means it's already building on the architecture that wins. In a world where the middle layer survives, HP sits comfortably in the DEC tier: departmental workstations, engineering systems, HP-UX, where IBM's mainframes are overkill. HP is one of the few companies that looks recognizable in both worlds. It just doesn't have to spend three decades figuring out what it is.

The real disruptive marriage in that world may be HP and DEC. Not the hostile acquisition chain of our timeline, where Compaq devours DEC in 1998, and HP devours Compaq in 2002, each merger destroying value and culture along the way. In a world where both companies are healthy, the logic is a merger of equals to consolidate the middle layer. HP brings printing, instruments, and HP-UX. DEC brings Alpha, VMS, and the strongest engineering culture in the industry. IBM may even endorse the idea. A credible combined competitor in the middle tier makes IBM look far less like the dominant monopolist it obviously is. It's the kind of merger that actually makes sense, which is why it could never happen in our timeline. We only merge companies after we've broken them.

Control Data Corporation survives, but it may not survive independently. The second big merger of that world, after HP-DEC, is the government and defense consolidation: UNIVAC, Burroughs, and Control Data combining into a Unisys that dwarfs the one we got. In our timeline, Unisys is an also-ran. In theirs, it's the defense and space communications integrator, the company that operates the networks on which the space race runs. IBM tolerates it because even IBM can't do everything alone, and because having a credible government contractor as a separate entity is useful cover.

Computer Associates becomes the Microsoft of that timeline. Charles Wang's acquire-and-consolidate model works even better when the mainframe market doesn't shrink. CA owns Ingres, the relational database that, in our timeline, gives rise to Postgres at Berkeley, which gives rise to PostgreSQL. In that timeline, none of that happens. Ingres stays proprietary. The alternative to DB2 isn't Oracle or Postgres. It's Ingres, and Charles Wang owns it. Just CA and IBM dividing the relational database market like old men splitting a chessboard. Oracle likely doesn't exist either. Without commodity Unix hardware needing a database that IBM won't provide, DB2 wins by default. Larry Ellison is probably still rich. Maybe yachts. Actually, probably yachts regardless.

But the real database monster is IMS. IBM's Information Management System was originally developed with Rockwell for Apollo to manage the Saturn V bill of materials. In our timeline, it becomes a legacy hierarchical database that enterprises spend decades trying to migrate away from. In a world where the space race never ends, IMS never becomes legacy. It becomes the most important database on earth, tracking every component of an ongoing planetary-scale space program.

Edgar Codd still publishes his relational model in 1970. It still matters. But it doesn't dethrone the hierarchical model, because the hierarchical model is running the space program, and nobody touches what's working. IMS evolves from tracking Saturn V components to tracking everything. It becomes the SAP of that world. Why build a separate ERP system when the database managing the most complex supply chain in human history is right there, waiting to be pointed at your business?

Cray still leaves to form his own company, because Seymour Cray was going to Seymour Cray no matter what timeline you handed him.

This is a world with essentially four pillars: IBM at the center, Motorola controlling the silicon and the airwaves, HP-DEC owning the middle tier, and Unisys running government and space infrastructure. Our world ended up with four titans, too: Apple, Google, Microsoft, and Amazon. Theirs are built on big iron and silicon. Ours are built on cloud and distributed computing. Same structure, different foundation. Four pillars is apparently what a computing industry converges on.

EDS thrives as the services layer underneath all of them. Ross Perot's insight that organizations need someone to run their computers never gets commoditized. EDS fills the role that IBM Global Technology Services fills in our timeline — the one IBM eventually spins off as Kyndryl — plus the roles occupied by Accenture, the Big Four consulting arms, and dozens of smaller IT services firms that never need to exist because Perot got there first.

If you've ever wondered what a world looks like where Ross Perot is a more consequential figure than Bill Gates, this is it.

The Network That Never Opens

Ethernet wins our timeline because it is cheap and good enough. Token Ring loses because it is expensive and built for reliability. In a world where IBM never fades, Token Ring scales. It's the networking equivalent of a four-way stop versus a traffic light. One is cheaper. The other doesn't result in collisions. 3Com, Bay Networks, Nortel — companies built on cheap Ethernet and the chaos it connects — fade from the photograph like Marty McFly's siblings.

The internet still exists. ARPANET is a government project. TCP/IP is a government protocol. But the World Wide Web doesn't arrive the same way, or at the same time. Berners-Lee invented it in 1989 on a NeXT workstation at CERN. No NeXT in that timeline, no web as we know it. Something like it probably emerges eventually — the problem is real — but it arrives later and is institutional. Built by IBM or AT&T, not by a physicist with a side project.

Without a consumer web, there are no browsers. Without browsers, no Netscape. Without Netscape, no browser war. Without the consumer web, no Google, no Amazon as we know it, no Facebook, no social media, no ad-supported internet economy. Just that sentence alone should make you sit with this for a minute. No ad-supported internet economy. Imagine it. Take your time.

What exists instead is something IBM would build. A networked information system: institutional, credentialed. Access is mediated. Identity is institutional. The network is a service, not a commons. At a minimum, you'd never have to accept a cookie policy.

Even desktops and laptops function as windows into mainframe data, not independent platforms. No "servers." No three-tier architecture. The desktop is a terminal that got smarter, not a mainframe that got smaller.

And without the consumer web, the cloud never needs to exist. In our timeline, the cloud isn't innovation. It's reconstruction. In a world where fragmentation never happens, there is nothing to rebuild. Which makes the cloud sound less like the future and more like a very expensive apology.

Imagine

This is where the alternate history stops being about companies and starts being about people. And cultures.

Imagine there's no CompUSA, and no Micro Center, too. Nobody building custom rigs in a strip mall. Imagine there's no PC Magazine, no Computer Shopper, no InfoWorld, no Macworld. Imagine BYTE magazine is still in print, the journal of record for a computing industry that thinks of itself as a profession rather than a lifestyle. A world where the most important tech publication is BYTE rather than The Verge has very different vibes.

Imagine there's no Netflix. No streaming. Blockbuster never gets disrupted. You're still arguing with your spouse about late fees. Some things are genuinely better in our timeline.

The biggest life that changes is Dennis Ritchie's. My ZDNet essay about him opens with a question: "Who was Dennis Ritchie?" Because almost nobody outside of open source knows. Not Gen Z. Not most millennials. Not most boomers or Xers. In our timeline, Ritchie is the Nikola Tesla of computing: the person whose work underpins everything, eclipsed by the Edison who gets the credit. Jobs dies in 2011, and the world stops. Ritchie dies a week later, and most people never hear about it.

In their timeline, that inversion never happens. Ritchie is the most consequential figure in computing. Not a businessman like Akers. Not a showman like Jobs. A messy genius with a cardigan and a Ph.D. from Harvard, the mad scientist who created an industry. He's their synthesis of Einstein, Hawking, and Jobs. K&R, the book he writes with Brian Kernighan, is practically a religious text. Every serious programmer has a copy. Most have two.

Jobs becomes a movie mogul. Pixar still happens. The Disney acquisition likely still happens. He just never returns to computing. There is no NeXT to acquire, so Jobs doesn't need saving. And when pancreatic cancer kills him in 2011, the world mourns a visionary filmmaker, not the man who put a computer in every pocket. His obituary leads with Pixar, not the iPhone. His closest friends are George Lucas and Steven Spielberg, not the founders of Silicon Valley companies that were never founded in this world.

Dave Cutler, who in our timeline leaves DEC for Microsoft and builds Windows NT, the foundation of every modern version of Windows, never leaves. He stays at Digital and builds the operating systems for the HP-DEC combined entity. No Cutler at Microsoft means Windows never becomes a serious enterprise OS. He's one of the most important systems programmers alive, doing exactly the work he was always meant to do, at the company he never had to leave.

Then there is Tim Cook. In our timeline, Cook spends 12 years at IBM's Personal Computer Company, leaves for Compaq, gets recruited by Jobs to Apple, transforms the supply chain, and becomes the first openly LGBTQ CEO of a Fortune 500 company. Remove the PC industry, and that sequence never forms. Cook is still at IBM, running logistics for the mainframe business. He's still brilliant, and he probably runs something significant; he just never becomes a household name. If the HP-DEC merger happens, Cook is exactly the person you'd hire to integrate two massive supply chains. He may end up as the most important operations executive in the second tier of computing, doing the same work for a company most people outside the industry have never heard of.

I know something about this. I spent years at IBM myself, leaving in 2012. In our timeline, I was at Canon in 1994, met my wife on Prodigy while advocating for OS/2, went off to consult on Wall Street, joined Unisys in 2005, and then IBM in 2007. In the alternate timeline, Prodigy is the Facebook equivalent, and I still meet my wife there, but I'm probably already a mainframer. OS/2 never exists, so it never fails, because IBM never needs a PC operating system. There's no Wall Street consulting detour because there's no fragmented IT landscape to consult on. I'm probably at IBM from the start, and I never leave.

There's no Ziff-Davis Computing. No CMP. No ZDNet, no CNET, no Tom's Hardware. The Register never materializes because there is no hand that feeds IT for it to bite. The personal computer didn't just create companies. It created careers, identities, and entire professional categories. Every technology journalist, every startup founder, every tech YouTuber is a product of the collapse. Remove it, and we don't disappear. We just never become who we became.

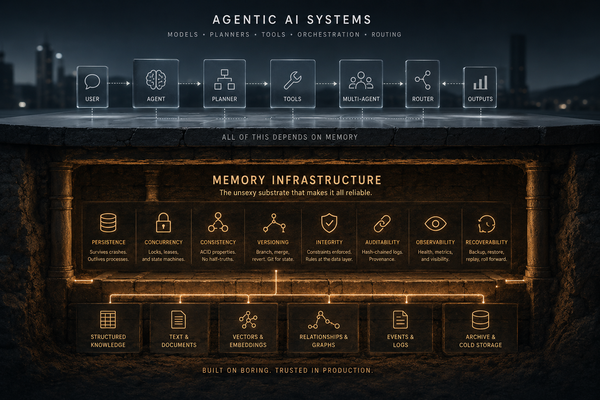

The Mainframes Are Back: Where Our Timelines Reconverge

We broke computing into pieces. Then we spent 40 years putting it back together. We may be finishing that reassembly right now.

The canonical story of computing: mainframes gave way to minicomputers, PCs, distributed systems, and the cloud. Smaller and more distributed wins. Every CTO presentation for 30 years has told this story with an arrow pointing down and to the right.

AI workloads don't follow that arc. They require massive centralized compute, specialized hardware, enormous power density, and coordinated execution at scale. Hyperscale clusters, GPU superpods, training rigs costing hundreds of millions. Centralized, expensive, controlled by a few companies, and inaccessible to individuals. They are mainframes. We just don't call them that.

In the For All Mankind timeline, institutional computing never fragments. IBM and its peers build centralized systems accessed through Newtons and 68000-based desktops that are smart windows into the mainframe. In our timeline, we're converging on a model in which a handful of companies operate enormous centralized facilities that individuals access via smart glass rectangles in their pockets. The end state is starting to look uncomfortably similar. Their world was built intentionally. Ours stumbled back into it after trying everything else first. There's a line often attributed to Winston Churchill about Americans always doing the right thing after exhausting all other options. The computing industry may be proving it right.

The Long Way Around

The difference between their world and ours may not be the destination. It may be the path.

They never fragmented. They stayed centralized. They built structured collaboration from the start. We fragmented everything, democratized access, built extraordinary things in the chaos, and are now re-centralizing under new constraints. We got the internet, open source, and the iPhone. They probably got stable infrastructure, fewer billionaires, and a subscription to BYTE magazine.

The consolidation pattern tells it most clearly. HP bought Compaq in 2002, and Compaq had already swallowed DEC in 1998, so HP is really reassembling the stack that collapsed. Oracle buys Sun. Dell buys EMC. IBM buys Red Hat. Every one of these reconstructs vertical integration the PC era destroyed. In their world, DEC never collapses, Compaq never forms, and if HP and DEC combine, it's a merger of equals, not a hostile-acquisition chain. There is less to consolidate because there was less to fragment.

The modern stack only exists because several pillars fell at once. IBM lost cultural dominance. Digital Equipment Corporation disappeared. Sperry, UNIVAC's parent, and Burroughs merged to form Unisys, which then faded into irrelevance. Centralized computing gave way to independent machines, and from that collapse came a genuinely new idea: anyone can own a computer, anyone can write software, anyone can build something new. That idea reshaped the world. But it wasn't inevitable. It required a specific sequence of institutional failures, and in a world where those institutions never fail, the idea never arrives in the same form.

Years ago, I wrote a series for ZDNet called "To the Moon," documenting IBM and UNIVAC's roles as the system integrators behind Apollo 11. The System/360s in Houston processed telemetry in real time. UNIVAC's 1230 systems handled decoding at tracking stations worldwide. These weren't peripheral contributors. They were the infrastructure. What struck me then, and strikes me harder now, watching the Artemis II coverage, is how completely that infrastructure disappeared from the story we tell about computing. We remember the Apollo Guidance Computer, the plucky little machine in the spacecraft. We forget the mainframes that made the whole thing work.

Tonight, Artemis II is splashing down in the Pacific. Four astronauts returning from ten days around the Moon, the first humans to travel there since 1972. They broke the distance record set by Apollo 13. They saw a solar eclipse from beyond the far side of the Moon.

Fifty-three years passed between the last Apollo crew and this one. In that gap, we dismantled the computing infrastructure that put them there, rebuilt it in a completely different form, and are now rebuilding it again in a form that looks remarkably like what we started with. We fragmented IBM's mainframes into PCs, reassembled them into cloud data centers, and are now concentrating them into AI superclusters that would be recognizable to the engineers who built the System/360s in Houston.

The computer industry we got wasn't inevitable. It was one path, shaped by collapse, fragmentation, and reconstruction. In another timeline, the space race never stopped, and the infrastructure I documented in that ZDNet series never became a footnote. It just kept evolving.

We took the long way around. But tonight, at least, we ended up in the same place: bringing people home from the Moon.