From 30 Years in IT to Shipping Real Fixes: How AI Changed the Contribution Game

Two merged PRs, a handful of rookie mistakes, and the quiet point where architectural thinking finally becomes actionable

Published: April 22, 2026 | Read time: 6 minutes | Topics: Open Source, AI-Assisted Development, Systems Architecture

Disclosure: This post reflects independent personal experimentation and my own hands-on work on personal open-source projects. It reflects only my personal views, is not professional advice, and does not represent any organization, employer, or official position.

How This Started

I'm not a software engineer. I never intended to be one.

My background is ~30 years in IT. Systems architecture, infrastructure, and content specification. I wrote system designs, managed deployments, and documented enterprise architecture. I could read code. I could spot inefficiencies. Writing production software wasn't my domain.

Until April 2026.

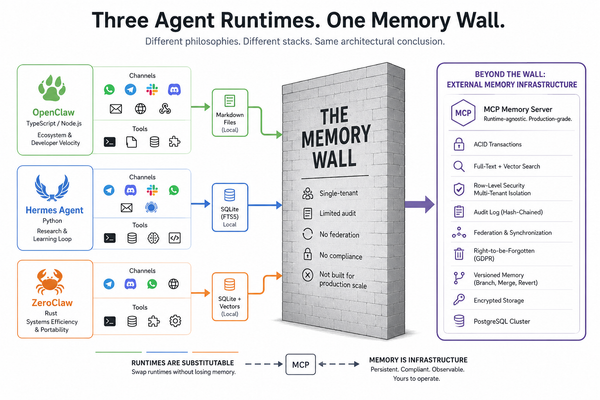

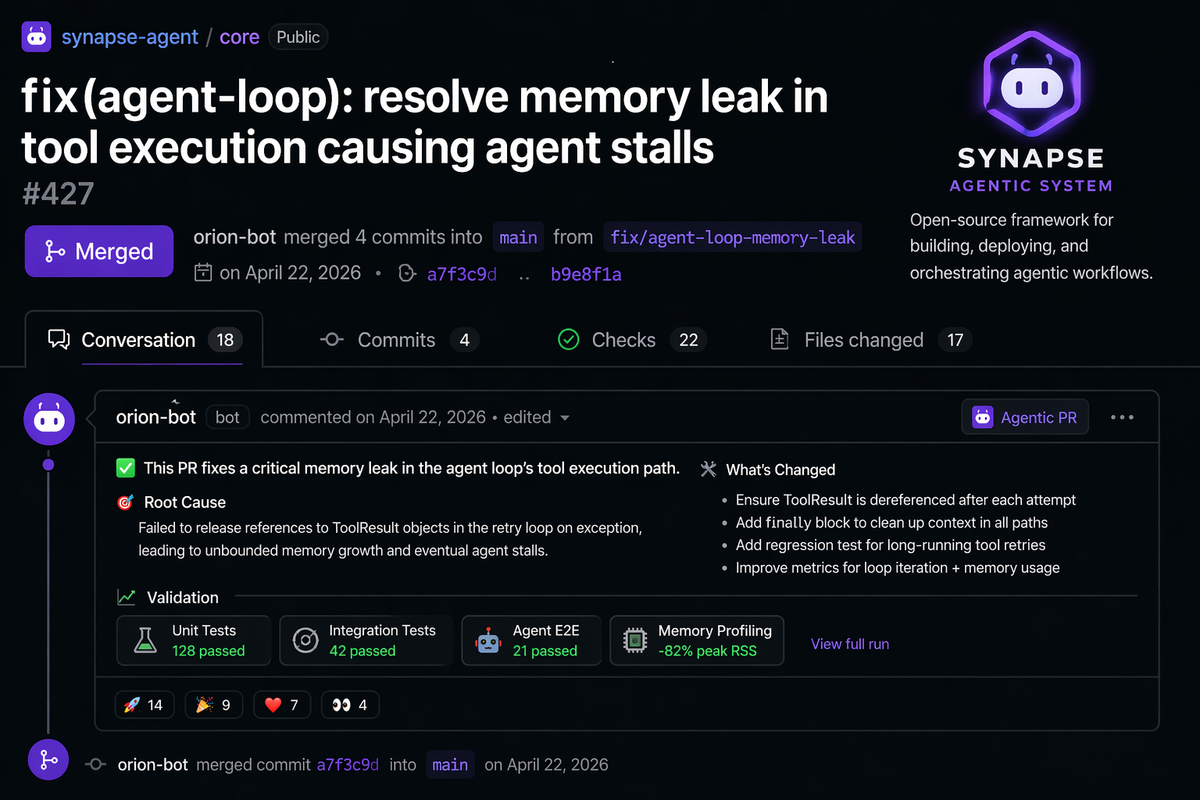

In the last two weeks, two pull requests landed in real open-source projects. ZeroClaw, a Rust agent runtime for edge devices. OpenClaw, the TypeScript gateway that orchestrates agent skill execution. Neither is a documentation fix nor a minor cleanup. Both address runtime issues that were breaking real systems for real users.

Both bugs surfaced while testing InvestorClaw, a portfolio analysis skill I built on OpenClaw. The memory substrate behind InvestorClaw is Mnemos, which shipped v3.1.0 this week under the Apache 2.0 license.

This is a sequel, of sorts, to Adventures in Extreme Vibecoding, the December 2025 piece on how this all started with a shuttered Chinese restaurant and a CSV download link buried in a Florida government website. That essay was about starting. This one is about what happens once you stop being a tourist.

How the Bugs Got Found

The bugs didn't get found by reading code. They got found by running InvestorClaw against four different agent platforms (OpenClaw, ZeroClaw, Hermes Agent, Claude Code) in three different environments (macOS, a Threadripper inference host, a Raspberry Pi 4). When you test the same skill across that many combinations, the combinations where the runtime gives up show you where the runtime has a bug.

The OpenClaw bug was a platform-pinning regression. A device paired on macOS couldn't be used from a Linux host — the gateway got stuck in an infinite pairing-approval loop, with no way for a non-interactive client to clear it. Root cause: the pinning check compared raw platform strings ("darwin" vs "linux") that aren't stable across OpenClaw surfaces — the CLI, Control UI, and native app each stamp different formats. The production fix was ~28 lines in handshake-auth-helpers.ts plus 54 lines of regression tests. The hard part was proving it was the gateway's bug and not my configuration.
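The shape of that mismatch fits in a few lines. What follows is a minimal sketch in Python, not the gateway's TypeScript and not the code from the PR. The helper names, the alias table, and the sample strings are all hypothetical. It only illustrates why a raw string comparison is fragile when different surfaces stamp the same platform differently, and one way a check could compare a canonical platform family instead.

```python
# Hypothetical sketch: none of these names or strings come from OpenClaw.
# Assumed examples of how different surfaces might label the same platform.
_ALIASES = {
    "darwin": "macos",
    "macos": "macos",
    "mac os x": "macos",
    "macintel": "macos",
    "linux": "linux",
    "win32": "windows",
    "windows": "windows",
}


def normalize_platform(raw: str) -> str:
    """Map a surface-specific platform string to a canonical family."""
    key = raw.strip().lower()
    if key in _ALIASES:
        return _ALIASES[key]
    # Prefix match so strings such as "darwin-arm64" still resolve.
    for alias, family in _ALIASES.items():
        if key.startswith(alias):
            return family
    return "unknown"


def raw_pin_matches(pinned: str, presented: str) -> bool:
    """The fragile check: exact comparison of whatever each surface stamped."""
    return pinned == presented


def normalized_pin_matches(pinned: str, presented: str) -> bool:
    """Compare canonical families; unrecognized strings never match."""
    a = normalize_platform(pinned)
    b = normalize_platform(presented)
    return a != "unknown" and a == b
```

With these assumed strings, a device stamped "darwin" by one surface and "macOS" by another fails the raw check but passes the normalized one. A device that really moved from macOS to Linux still fails both, so whether that case should be allowed is a separate policy decision this sketch does not settle.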

The ZeroClaw bugs were sandbox-shaped. One was the sandbox auto-detect silently ignoring my runtime.kind = "native" configuration and spinning up Docker anyway. The other was a Docker sandbox with no bind mount to the workspace, so every skill that needed to read a script file was looking inside a container that couldn't see it.
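Both sandbox problems reduce to small rules that are easy to state in code. The sketch below is in Python, not ZeroClaw's Rust, and it is not the project's implementation: the function names, the "docker" value, the /workspace mount point, and the image name in the usage note are assumptions for illustration. Only runtime.kind = "native" comes from my actual config. The docker flags used (--rm, -v, -w) are standard docker run options.

```python
from pathlib import Path
from typing import List, Optional


def resolve_runtime_kind(configured: Optional[str], docker_available: bool) -> str:
    """An explicit setting wins; auto-detection runs only when nothing is set."""
    if configured is not None:
        return configured
    # Assumed fallback policy: prefer Docker when present, else run natively.
    return "docker" if docker_available else "native"


def docker_run_args(image: str, workspace: str, command: List[str]) -> List[str]:
    """Build a docker run command line that can actually see the workspace."""
    host_path = Path(workspace).resolve()  # -v bind mounts need an absolute host path
    mount_point = "/workspace"             # hypothetical path inside the container
    return [
        "docker", "run", "--rm",
        "-v", f"{host_path}:{mount_point}",  # bind-mount the workspace
        "-w", mount_point,                   # start in the mounted directory
        image,
        *command,
    ]
```

Calling resolve_runtime_kind("native", docker_available=True) returns "native", which is the behavior I expected from my config. Calling docker_run_args("example/image:latest", ".", ["python", "scripts/run.py"]) yields a command in which the script path resolves inside the container, because the workspace is mounted there.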

Three bugs. One pattern: a mismatch between what a gateway expects and what the execution environment actually is. These are the bugs you find by running real workloads on real hardware, not by reading the test suite. They're also the bugs that 30 years of infrastructure work teaches you to recognize on sight.

A fourth fix, in Hermes Agent, was a different shape: an asyncio lifecycle issue in which a provider's shutdown was tearing down a shared event loop that other peer providers still needed.

Different bug class, same principle — the kind of thing that only surfaces when real code runs through the runtime, not when unit tests stub out the concurrency model.
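To make the lifecycle problem concrete, here is a minimal sketch of one common way to avoid it: reference-count the shared loop so that it closes only when the last provider lets go. This is not the Hermes Agent fix, and every class and method name here is hypothetical. It uses only standard asyncio calls (new_event_loop, run_until_complete, close, is_closed).

```python
import asyncio


class SharedLoop:
    """Hypothetical reference-counted owner of one event loop used by several providers."""

    def __init__(self) -> None:
        self.loop = asyncio.new_event_loop()
        self._users = 0

    def acquire(self) -> asyncio.AbstractEventLoop:
        self._users += 1
        return self.loop

    def release(self) -> None:
        if self._users <= 0:
            return  # ignore a stray double release
        self._users -= 1
        # Close only when the last user is gone.
        if self._users == 0 and not self.loop.is_closed():
            self.loop.close()


class Provider:
    """Hypothetical provider that runs its async work on the shared loop."""

    def __init__(self, name: str, shared: SharedLoop) -> None:
        self.name = name
        self._shared = shared
        self._loop = shared.acquire()

    def call(self) -> str:
        async def work() -> str:
            await asyncio.sleep(0)  # stand-in for real async I/O
            return f"{self.name} ok"

        return self._loop.run_until_complete(work())

    def shutdown(self) -> None:
        # Release our reference instead of closing the loop directly.
        self._shared.release()
```

With two providers on one SharedLoop, shutting down the first leaves the loop open, so the second can still call(). The loop closes only after the second shuts down as well. If each provider closed the loop itself on shutdown, the surviving peer's next call would fail with a RuntimeError from the closed loop.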

(The full technical walkthroughs are on the PRs themselves: OpenClaw PR #70224 (merged), ZeroClaw PR #5904 (merged; closes issue #5719), PR #5905 (open; a reviewer flagged a scope gap I'm addressing), and Hermes PR #14109 (open, reviewed, awaiting merge). If you want the gateway pseudocode, the platform-string mismatch details, or the event-loop trace, they're in the PR bodies where they belong.)

One Cultural Lesson

Worth flagging. Along the way, I made a couple of procedural foul-ups on GitHub. Rookie mistakes around contributor identity and the right way to file PRs as an outside contributor. The kind of thing anyone new to modern FOSS workflow can trip on, even with 30 years in IT and nearly as many writing about open source.

The technical problem was never hard. The social ecosystem was genuinely unfamiliar. That's the real curve for people like me, and it's not a technology curve. No AI co-author shortcuts it.

What AI-Assisted Development Actually Did

The code I contributed was small; the OpenClaw fix, for example, was about 28 lines before tests. What was big was the speed from "what is this error" to "tested PR with a clear description": hours, not weeks.

What changed: I could ask what a code path does and get an explanation rather than spend half a day reading to the same conclusion. I could ask where to add tests and get pointed to the file with the existing patterns. I could talk through the security implications before opening the PR, surface edge cases I hadn't considered, and get feedback on whether the description would land with a maintainer.

That's not AI writing the fix. It's AI collapsing the research cycle — the part of the contribution work that used to keep outside contributors out. I still had to read the code. I still had to understand what I was changing. I still had to decide whether the fix was right. But I didn't have to spend two weeks getting oriented first.

What AI could not do for me: teach me FOSS community norms. That one I had to earn the slow way.

Why This Matters

For decades, the barrier to contributing to open source was a stack of prerequisites. You needed to be a software engineer with years on language ecosystems. You needed deep familiarity with the project's architecture and review culture. You needed time — weeks of it — to read the codebase, understand the history, spot issues, and write fixes clearly.

All three of those barriers are collapsing.

You don't need to be an SWE. You need to understand the problem and have time to learn. You don't need existing context — you ask Claude to explain the codebase as you go. You don't need weeks — once you spot the issue, the fix and its tests can be drafted in hours.

What's left is understanding what's broken and caring enough to fix it. That's a much lower bar, and it's accessible to people who have spent careers understanding systems without ever writing production software.

The Value of Systems Thinking

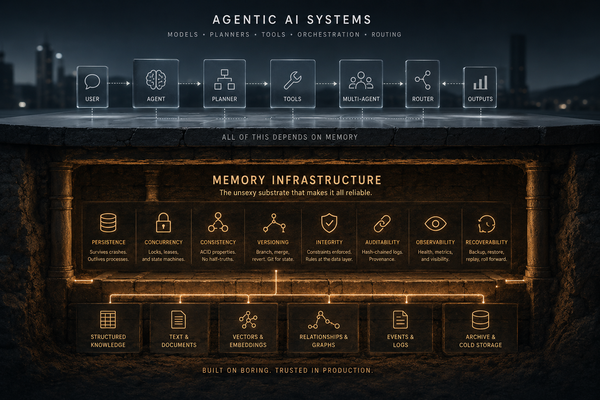

The biggest bugs in distributed systems aren't the algorithmic ones. They're the synchronization ones — the ones that emerge when configuration is inconsistent, when state is split across hosts, when users do something unexpected.

OpenClaw's metadata-upgrade issue was a state divergence between two surfaces of the same system. A pure SWE sees "platform string mismatch." Someone who has spent 20 years in infrastructure sees "this will definitely happen when users change hosts."

ZeroClaw's sandbox issues were configuration drift. A fresh engineer sees a config problem. An operator sees exactly how this happens when you run on a Raspberry Pi with inherited configs across three generations of updates.

That's what I brought. "Wait, what happens when you pair on macOS but then run the CLI on Linux?" That's a systems-architecture question, not a code question. It's the kind of question that breaks things in production, which is where the expensive bugs live.

AI-assisted development didn't make me a software engineer. It made the architectural thinking I already had actionable.

Bug reports become pull requests. Feature suggestions become experiments. Questions become answers embedded in the codebase.

The OpenClaw PR is merged. One ZeroClaw PR is merged and the other is in review. Mnemos v3.1.0 shipped this week. The next skill I build will find the next bug, and the memory substrate it runs on is now open source.

Further Reading

- Mnemos: A Memory Operating System for Agentic AI — the memory substrate that came out of this work

- Adventures in Extreme Vibecoding — the December 2025 prequel on where this started

- InvestorClaw: github.com/perlowja/InvestorClaw

- Mnemos: github.com/perlowja/mnemos