The Model Nobody Expected: How InvestorClaw's Test Harness Rewrote My LLM Recommendations

Disclosure: This post reflects independent personal experimentation using publicly available documentation and pricing. All model recommendations are based on my own hands-on testing with OpenClaw in the context of agentic skill development. It reflects only my personal views, is not professional advice, and does not represent any organization, employer, or official position. Note: My employer has a licensing relationship with Groq; readers can evaluate Groq (a different company than xAI Grok mentioned in this article) independently at console.groq.com.

April 15, 2026: This article is a sequel to OpenClaw Backend Optimization: Choosing a Worry-Free LLM for Persistent AI Agents, published in February 2026. That article recommended Grok 4.1 Fast as the only viable primary LLM for persistent agent workloads, based on a manual provider-by-provider elimination on cost, context, and rate limits. That conclusion was based on provider economics. It was never tested against a production skill.

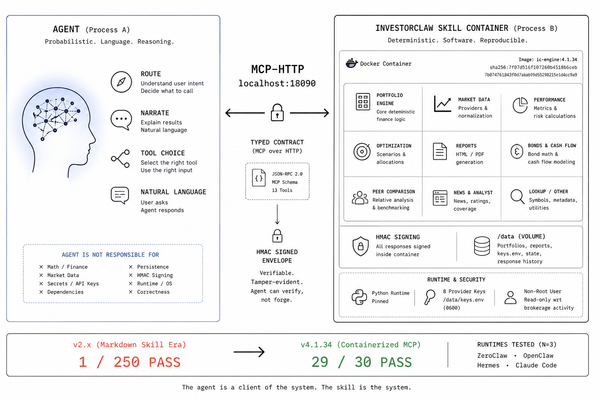

Over the past two months, I built InvestorClaw, a 16-command portfolio analysis skill for OpenClaw, and ran it through a 114-workflow test harness scoring synthesis quality across 14 dimensions on 20+ LLMs. The results overturned several conclusions for data-heavy workloads while confirming others for general operational use. This article incorporates that data.

What Changed

Provider pricing tables tell you what a model costs. They don't tell you what it does with your data. The February article was a rigorous elimination on cost, context, and rate limits. What it lacked was a way to measure whether the surviving model actually produces good output when running a real skill.

Over the past two months, I built that measurement. InvestorClaw is a 16-command portfolio analysis skill for OpenClaw, and its V6.1.2 test harness runs 114 workflow validations across 14 quality dimensions on 20+ LLMs. The full benchmark data is in MODELS.md. The companion essay on the skill's architecture is Beyond the Demo.

Why Provider Economics Weren't Enough

The February article eliminated Claude (too expensive for continuous use), GPT-5.4 (too expensive and moderate context), and everything in the nano/mini tier (the wrong capability class for complex skills). Grok 4.1 Fast was the only model standing. That elimination was correct on its own terms.

But when a model executes a multi-step portfolio analysis workflow, fetches live data from four provider tiers, computes bond analytics deterministically in Python, and synthesizes a coherent analysis from compact structured summaries, the questions that matter are different. How many metrics get cited? How many tickers get mentioned? Does the model fabricate portfolio facts? Does it maintain coherence across an eight-step pipeline? The February article had no way to measure any of that.

The InvestorClaw Benchmark

InvestorClaw is a portfolio analysis skill for OpenClaw: 16 commands, 215-position test portfolio, deterministic Python math, four-tier data provider fallback chain, FINOS CDM-inspired data models, structured guardrail enforcement, and an optional local consultation layer using Gemma 4 E4B via Ollama. The architecture is described in detail in the companion essay. What matters here is the test harness.

Version 7.x of the harness runs 114 workflow validations across a 14-dimensional quality scoring framework (QC1 through QC14). The metric that best captures synthesis quality for this discussion is QC4: metric citation density. QC4 counts the number of specific, data-grounded financial metrics (yields, durations, allocations, drawdowns, analyst ratings) that appear in the model's synthesis output. A higher QC4 means the model is doing more with the data it was given.

QC8 (fabrication detection) is the hard gate. Any model that scores QC8 > 0 (fabricated portfolio facts detected) fails the harness regardless of everything else.

I tested 20+ LLMs in both single-model (cloud-only) and hybrid (cloud operational + local Gemma 4 E4B consultation) configurations across runs IC-RUN-20260413-002 through IC-RUN-20260414-004.

An important scope note: These benchmarks measure synthesis quality on a specific workload: data-heavy portfolio analysis with structured financial output. The rankings below reflect which models best convert compact structured data into grounded, citation-dense financial analysis. They are not general-purpose agentic LLM rankings. For users running OpenClaw primarily for general operational tasks (calendar management, notification routing, simple tool-calling workflows, code generation), the original article's recommendations still hold. Grok 4.1 Fast remains the strongest general-purpose choice for persistent agent workloads in terms of cost, context, and rate-limit headroom. The revised rankings apply when your skills look like InvestorClaw: data-intensive, multi-step, synthesis-heavy, and quality-measurable.

The Results Nobody Expected

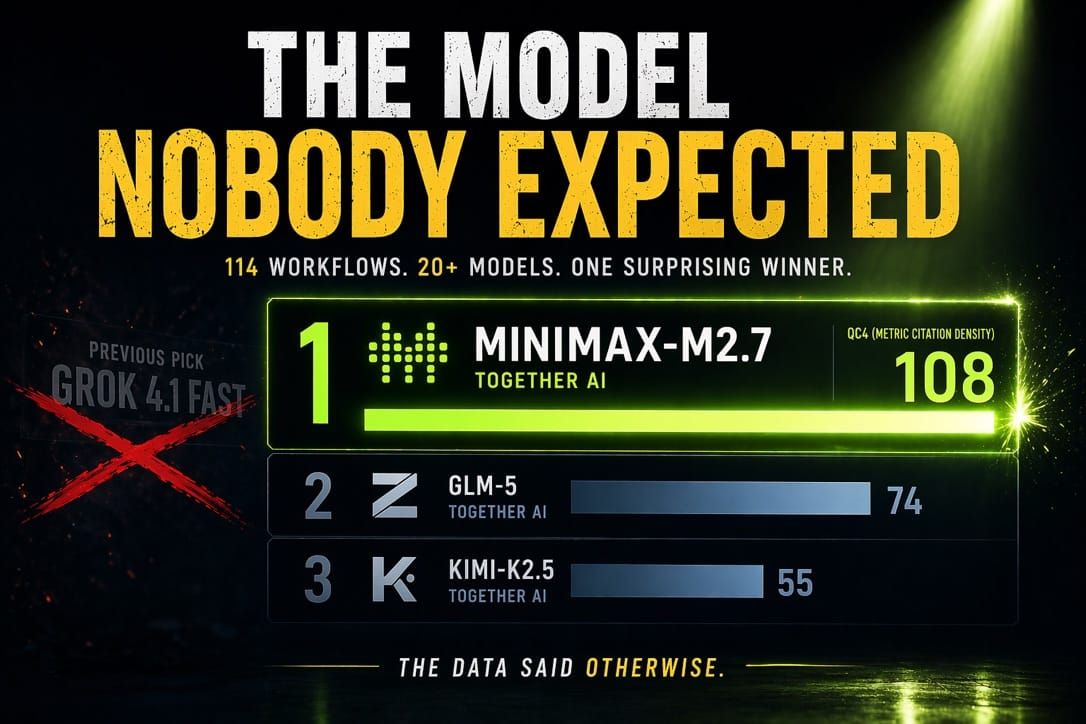

MiniMax-M2.7 Wins

The model most people haven't heard of achieved the highest synthesis density among the configurations tested.

MiniMax-M2.7, served through Together AI, achieved QC4=108 in single-model mode. For context: Grok 4.1 Fast scored QC4=39 in cloud-only mode. GPT-5.4 scored QC4=28. Gemini 3.1 Pro scored QC4=46. MiniMax-M2.7 didn't edge them out. It nearly tripled the next-best cloud-only score.

At $0.30/$1.20 per million tokens (input/output), it also delivered the best price-to-quality ratio, four times that of the next competitor. The model that won wasn't the cheapest (Groq's GPT-OSS-120B is cheaper), or the largest context (Groq's 2M dwarfs it), or the most famous brand. It was the model that best converted structured data into cited, grounded financial analysis.

Most Frontier Models Can't Even Finish the Workflow

This was the other surprise. General benchmark performance does not predict agentic skill completion.

DeepSeek-R1 outputs tool names as text code blocks rather than as function calls. Llama 4 Maverick and Scout get their tool payloads rejected by the Together AI serverless endpoint. Qwen3-235B-Thinking makes correct tool calls but takes five to ten minutes per step. GLM-4.7 fails while GLM-5 works. GPT-OSS-20B passed the harness in earlier runs but now produces malformed tool calls. Grok 4.20 (non-reasoning) fails in cloud-only mode entirely and only works with the consultation layer enabled.

The gap between "this model is smart" and "this model can execute a structured agentic skill end to end" is wide and not predictable from published benchmarks.

Consultation Helps Some Models, Hurts Others

The original article declared two-tier architecture (cloud orchestration + local consultation) "dead on arrival." The InvestorClaw data says it's conditional.

Adding the local Gemma 4 E4B consultation layer improved some models dramatically: Kimi-K2.5 gained 49% on QC4, GLM-5 gained 16%, and Grok 4.1 Fast gained 33%. But MiniMax-M2.7 dropped from QC4=108 to 97 under hybrid mode. Gemini 3.1 Pro dropped 17%. GPT-OSS-120B dropped 53%.

The operational model's synthesis style determines whether consultation data is expressed as citations or is ignored. This is not something you can predict from model specs. It required the harness to discover. Two-tier architecture works when the operational model's style is compatible with the consultation format. When it isn't, it actively degrades output.

Updated Rankings: Data-Heavy Synthesis Workloads

These rankings reflect performance on InvestorClaw's portfolio analysis benchmark. They measure synthesis quality on structured financial data, not general-purpose agentic capability. For general operational workloads, see the original article's recommendation (Grok 4.1 Fast) and the scope note above.

Single-Model (Cloud-Only, No Local GPU Required)

| Rank | Model | QC4 | Cost (In/Out per 1M) | Context | Provider |

|---|---|---|---|---|---|

| 1 | MiniMax-M2.7 | 108 | $0.30 / $1.20 | 197K | Together AI |

| 2 | GLM-5 | 74 | $1.00 / $3.20 | 203K | Together AI |

| 3 | Kimi-K2.5 | 55 | Together pricing | 262K | Together AI |

| 4 | Gemini 3.1 Pro | 46 | ~$1.25+ / ~$10.00+ | 1M | |

| 5 | DeepSeek-V3.1 | 44 | $0.60 / $1.70 | 131K | Together AI |

| 6 | Grok 4.1 Fast | 39 | $0.20 / $0.50 | 2M | xAI |

| 7 | GPT-5.4 | 28 | $2.50 / $15.00 | 272K | OpenAI |

| 8 | GPT-OSS-120B | 17 | $0.15 / $0.60 | 128K | Groq |

Four of the top five are served through Together AI. Grok, the original article's sole recommendation, lands in sixth place.

Hybrid Mode (Cloud + Local Gemma 4 E4B Consultation)

| Rank | Configuration | QC4 | Delta vs Cloud-Only |

|---|---|---|---|

| 1 | MiniMax-M2.7 + gemma4-consult | 97 | -10% (cloud-only is better) |

| 2 | GLM-5 + gemma4-consult | 86 | +16% |

| 3 | Kimi-K2.5 + gemma4-consult | 82 | +49% |

| 4 | Grok 4.1 Fast + gemma4-consult | 52 | +33% |

| 5 | DeepSeek-V3.1 + gemma4-consult | 43 | Flat |

| 6 | Gemini 3.1 Pro + gemma4-consult | 38 | -17% (cloud-only is better) |

| 7 | GPT-5.4 + gemma4-consult | 27 | Flat |

| 8 | GPT-OSS-120B + gemma4-consult | 8 | -53% (do not use hybrid) |

MiniMax-M2.7 hybrid delivers the highest absolute hybrid QC4 and is the recommended configuration when HMAC fingerprint chains and audit controls are required. But its cloud-only score is higher. Use hybrid for audit provenance, cloud-only for maximum synthesis density.

The Revised Elimination Table

The February article's elimination table scored on cost, context, and rate limits. This revision adds synthesis quality (QC4) and agentic completion (pass/fail) as measured dimensions.

| Provider/Model | In/Out per 1M | Context | QC4 (Cloud) | QC4 (Hybrid) | Verdict |

|---|---|---|---|---|---|

| Together / MiniMax-M2.7 | $0.30 / $1.20 | 197K | 108 | 97 | Recommended default |

| Together / GLM-5 | $1.00 / $3.20 | 203K | 74 | 86 | Best hybrid gains |

| Together / Kimi-K2.5 | Together pricing | 262K | 55 | 82 | Strong hybrid candidate |

| Google / Gemini 3.1 Pro | ~$1.25+ / ~$10.00+ | 1M | 46 | 38 | Cloud-only preferred |

| Together / DeepSeek-V3.1 | $0.60 / $1.70 | 131K | 44 | 43 | Budget cloud alternative |

| xAI / Grok 4.1 Fast | $0.20 / $0.50 | 2M | 39 | 52 | Enterprise/high-context only |

| OpenAI / GPT-5.4 | $2.50 / $15.00 | 272K | 28 | 27 | Consultation only |

| Groq / GPT-OSS-120B | $0.15 / $0.60 | 128K | 17 | 8 | Speed/budget tier |

Eight additional models were tested and either failed the harness outright (GPT-OSS-20B, DeepSeek-R1, Llama 4 Maverick/Scout) or could only operate in hybrid mode (Grok 4.20). Claude and GPT-5.4 Mini were excluded on the same cost/context grounds as the February article. The full blocked, degraded, and partial-pass catalog is in MODELS.md.

Configuration Profiles

Profile 1 — Cloud-Only Default (No GPU Required)

Model: together/MiniMaxAI/MiniMax-M2.7

The recommended starting point for most users running data-heavy skills. Highest synthesis quality of any single-model configuration. No local GPU, no Ollama endpoint, no infrastructure overhead.

{

"models": {

"providers": {

"together": {

"baseUrl": "https://api.together.xyz/v1",

"api": "openai-completions",

"models": [

{

"id": "MiniMaxAI/MiniMax-M2.7",

"name": "MiniMax M2.7",

"contextWindow": 197000,

"maxTokens": 16000

}

]

}

}

},

"agents": {

"defaults": {

"model": {

"primary": "together/MiniMaxAI/MiniMax-M2.7"

}

}

}

}

Monthly cost estimate (moderate usage, 50M input / 12.5M output): ~$30

Profiles 2-4

Profile 2 (Hybrid with audit controls): Same operational model as Profile 1, plus local Gemma 4 E4B via Ollama for HMAC fingerprint chain and verbatim attribution. Requires ~10 GB VRAM. Use when audit provenance is required, not for maximum synthesis density (cloud-only QC4=108 is higher than hybrid QC4=97).

Profile 3 (Budget/Speed): groq/openai/gpt-oss-120b at $0.15/$0.60 per million tokens, 500 tok/s inference. 128K context limits this to small- to medium-sized portfolios. Do not use GPT-OSS-20B (fails the harness).

Profile 4 (Enterprise/High-Context): xai/grok-4-1-fast with local Gemma 4 E4B consultation. The only model in the 2M context tier. Use when portfolio size or session length exceeds MiniMax-M2.7's 197K window. Cloud-only QC4=39 is mid-tier; consultation is required (hybrid QC4=52).

Full JSON configurations, .env settings, and hardware guidance for all four profiles are in the InvestorClaw README.

Context Window Still Matters

The original article's argument that the context window is stability insurance remains valid. For InvestorClaw's 215-position portfolio in compact mode, all tested models have adequate headroom (the benchmark uses 17-34K tokens). The context ceiling becomes the decisive factor at scale:

| Scenario | Estimated Tokens | Fits 128K? | Fits 197K? | Fits 2M? |

|---|---|---|---|---|

| 215 holdings, compact (benchmark) | ~17-34K | Yes | Yes | Yes |

| Raw W1 output, non-compact | ~72K | Yes | Yes | Yes |

| Full enriched session (215 symbols) | ~80-120K | Tight | Yes | Yes |

| Large portfolio, non-compact (500 holdings) | ~300-400K | No | No | Yes |

| Enterprise portfolio + long session (1000+) | ~1M+ | No | No | Yes |

For most personal-scale users running compact mode, MiniMax-M2.7's 197K is more than sufficient. Grok's 2M advantage becomes real only at enterprise scale or in extended multi-turn sessions where accumulated history risks truncation.

Local Inference: Validated, Conditional

The original article covered local inference as a theoretical consultation option. InvestorClaw's production deployment validates it with data.

Gemma 4 E4B runs at approximately 65 tok/s on a 24GB GPU via Ollama, producing structured per-symbol analyst summaries that are HMAC-fingerprinted and passed to the cloud operational model as verbatim quoted material. The enrichment layer produces a 10-15x step change in information density for models compatible with the consultation format.

The key finding that the original article couldn't have predicted: consultation compatibility is model-dependent. The same consultation layer that lifts Kimi-K2.5 by 49% degrades GPT-OSS-120B by 53%. The recommendation is no longer "add local consultation if you have the hardware." It's "add local consultation if you have the hardware and your operational model benefits from it." Check the hybrid benchmarks in MODELS.md before investing in a local GPU for this purpose.

The hardware guidance from the original article holds: 10GB of VRAM minimum for the Gemma 4 E4B, an RTX 3080-class or better GPU, or Apple Silicon with 32GB+ unified memory. The economic argument also holds: for most users, the cloud-only path with MiniMax-M2.7 delivers better synthesis quality than hybrid mode at a lower total cost and with zero hardware investment.

The Model That Still Doesn't Exist

The original article's most durable conclusion was that no LLM product category is deliberately designed for personal, persistent-agent use. That remains true. The requirements list is the same:

High context. 1M minimum, 2M preferred. Compaction is a failure mode, not a feature. High throughput for large inputs without rate-limit walls. Fast response time. Balanced parameters for orchestration, structured tool calling, schema adherence, and multi-step chaining. Resource-efficient at an agentic scale. Priced for continuous execution at $25-100 per month.

What changed is that Together AI's model catalog, particularly MiniMax-M2.7, comes closer to meeting those requirements than anything available when the original article was written. The synthesis quality gap has closed. The context gap hasn't. A model with MiniMax-M2.7's synthesis capability and Grok's 2M context window, priced for continuous personal use, would own this market.

That model still doesn't exist. But the bar for what counts as "good enough" has moved substantially since February.

The Platform Question, Revisited

The original article spent significant space on the xAI platform risk because Grok was the sole recommendation. With MiniMax-M2.7 via Together AI as the new default, users who prefer to avoid xAI can now do so without a synthesis quality penalty.

For users who do use Grok (Profile 4, enterprise/high-context), the platform considerations from the original article remain relevant. xAI's operational notes apply: prepaid credits with a $0 invoiced limit by default (set a manual limit before deploying unattended agents), and native web search tools at $0.005/call.

The broader point: single-provider dependency is less of a concern when the recommended configuration uses Together AI, which serves models from multiple independent labs (MiniMax, Zhipu, Moonshot, DeepSeek). Provider diversification happens naturally when the best models are hosted on an aggregation platform.

Conclusion

These are not competing recommendations. They serve different workloads. For general-purpose persistent agent workloads (scheduling, notifications, tool routing, code generation), the February article's answer still stands: Grok 4.1 Fast, on cost, context, and rate limit headroom. For data-heavy skills that produce structured synthesis output (portfolio analysis, financial reporting, anything where you can measure whether the model did something useful with the data), MiniMax-M2.7 via Together AI, by a wide margin.

The model that won wasn't the cheapest, the largest, or the most famous. It was the one that best converted structured data into grounded analysis. The consultation layer that was declared dead turned out to be conditional, not broken. Models that looked viable on paper failed to complete the workflow. None of this was predictable from provider pricing tables. All of it was discoverable with a harness.

One caveat: this is a snapshot. These benchmarks were run April 13-14, 2026. Models that fail today could work next month. Models that win today could be displaced by a new release next week. GPT-OSS-20B passed the harness in earlier runs and now fails. Grok 4.20 went from degraded to passing between test cycles. The rankings are evidence, not permanent truth. The harness is the durable asset. The rankings are what it produced on these dates.

The full benchmark data is public in MODELS.md. The skill is Apache 2.0 on gitlab.com/perlowja/InvestorClaw. The architecture essay is Beyond the Demo14-dimensional.

If you're running data-heavy skills on OpenClaw today: Profile 1, MiniMax-M2.7, Together AI.

API Key Storage

Keys are stored per-agent at ~/.openclaw/agents/main/agent/auth-profiles.json:

{

"version": 1,

"profiles": {

"together-main": {

"type": "api_key",

"provider": "together",

"key": "YOUR_TOGETHER_AI_API_KEY"

},

"xai-main": {

"type": "api_key",

"provider": "xai",

"key": "xai_YOUR_XAI_API_KEY"

},

"groq-main": {

"type": "api_key",

"provider": "groq",

"key": "gsk_YOUR_GROQ_API_KEY"

}

},

"order": {

"together": ["together-main"],

"xai": ["xai-main"],

"groq": ["groq-main"]

},

"lastGood": {

"together": "together-main"

},

"usageStats": {}

}

Ensure restricted permissions:

chmod 600 ~/.openclaw/agents/main/agent/auth-profiles.json

Switching Configurations

Edit ~/.openclaw/openclaw.json, then:

openclaw gateway stop

openclaw gateway start

openclaw channels status

No retraining required. Configuration changes apply immediately.